ConnectALL has had the privilege of serving organizations around the globe with our award-winning Value Stream Integration platform. We have helped companies solve problems ranging from the simple, such as synchronizing bugs from Azure DevOps to Micro Focus ALM, to much more complex digital transformation efforts involving mergers, acquisitions, and spin-offs of business units. Along the way, our professional services team has helped these companies visualize the tools, people, and processes across their software value streams, while providing actionable recommendations to improve both software delivery speed and quality.

Although every organization is unique, we have recorded and documented a common scenario faced by organizations running ServiceNow, Micro Focus Application Lifecycle Management (ALM, formerly HPE Quality Center), and Atlassian JIRA, which we will share in this blog post. A common issue that plagues most QA and software testing leaders is the lack of traceability from software changes to the originating requirements. In fact, the original requirement is often nowhere to be found, and it is rarely traced to the downstream work items that spawned from it. Let us look at an example.

One company we worked with extensively was using HPE Quality Center (now Micro Focus ALM) to manage its manual testing and defect tracking for an SAP rollout. The company had a project charter, had hired a world-class Solution Integrator (SI) to assist, and had set up Microsoft SharePoint as its tool of choice for tracking change requests. From the outside looking in, this company had all the right pieces of the puzzle. From the inside, however, it was apparent that the process had been broken from the outset. Here is an example dialogue between a ConnectALL Solution Consultant and several key members of the customer's organization. In this example, I was the Senior Solution Consultant tasked with helping our customer transform their software value stream:

1) Question (Johnathan): Where are you tracking the approved changes to baseline requirements?

Answer (Our Customer): Our Solution Integrator suggested that we use a SharePoint list to track any changes approved during the implementation. For each entry in that list, we record a unique identifier and a description, and we attach the relevant approval signatures.

2) Question (Johnathan): Are you associating each change that you log in SharePoint with the original requirement?

Answer (Our Customer): Well, yes and no. We attach the original design document to the change within SharePoint. Originally, we used SAP Solution Manager (SolMan) to baseline our requirements. About 3 months into the project, our new Project Manager decided to go in a different direction, so we now upload all the design documents to SharePoint.

3) Question (Johnathan): Okay, let me ask a different question. How does your Test Manager scope their regression testing cycles? Do they manually peck through this SharePoint list and attempt to understand what existing functionality might have been impacted by a change?

Answer (Our Customer): That is a good question. I guess we just trust that our Test Management team understands how to wrap an effective test strategy around the existing process. Admittedly, it is not an ideal scenario, but we’ve hired the best and brightest to ensure the highest level of software quality.

4) Question (Johnathan): Do you have a post-go-live strategy for handling ongoing changes, enhancements, break/fix issues, and bug fixes?

Answer (Our Customer): We are nearly 18 months away from releasing the Minimum Viable Product (MVP) to our beta group. Because of that, we have not given much consideration to the ‘steady state’ plan for after the product is in the hands of our customers.

In the brief example above, we observed three major points:

- In a multi-vendor rollout of a large-scale ERP solution like SAP, process changes occur frequently, often on a whim when new project leadership arrives. In-flight changes like these typically have the unintended consequence of disjointed processes. In this case, traceability between change requests and original requirements was lost, and as a downstream result the project test manager cannot effectively scope regression testing cycles.

- There is often “blind trust” in the manual processes and techniques used by colleagues on large-scale platform implementations and product development. Nobody is quite sure what the others are doing, but they assume that all bases are covered because they were led to believe this during project team meetings. Adopting a Value Stream Management approach to ERP roll-outs and software product development can provide the necessary end-to-end visibility to illuminate bottlenecks, breakdowns, and inefficiencies.

- Nearsightedness can have long-term consequences in both product-oriented software development and larger-scale ERP rollouts. In the example above, our customer is about 1.5 years away from releasing the MVP to the marketplace, so they have not given much consideration to the post-go-live maintenance and support strategy for their software application. Without a long view of their product’s survivability, they overlook inadequate processes (e.g., change management) that seemingly have no negative impact today but will haunt them in the near future and over the long term.

Steps to Optimize and Prepare for Long-term Success

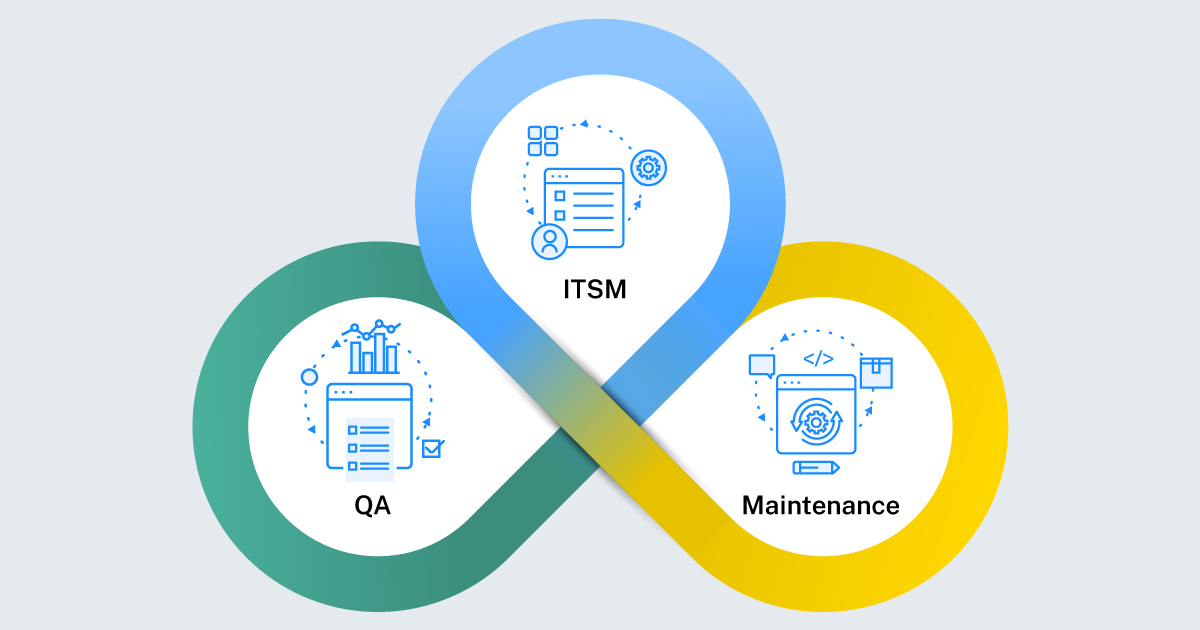

In today’s digital world, we have heard a lot about how value stream management can help accelerate software delivery and improve quality. Most of the thought leadership presentations in circulation are high-level, with little guidance on “how” to start implementing value stream management principles. Building on the scenario shared in this article, let us walk you through best practices for optimizing the “triad,” three areas where organizations commonly run into problems at the points where they meet:

- IT Service Management

- Quality Assurance/Testing

- Long-term Maintenance

These three areas of your software development lifecycle have more touchpoints than most people realize. Our example above illustrates how an in-flight process change by a new project manager can have long-term negative consequences for product quality. A Value Stream Leader, whether appointed or emergent, is someone in your organization with the foresight to see the forest for the trees across your overall environment (e.g., through a Value Stream Visualization), and with the experience to articulate the unintended long-term consequences of not making the necessary changes and improvements today. Let us look at your IT Service Management discipline first.

IT Service Management (ITSM)

Change management is a broad term in software circles, and in most organizations it falls under ITSM. However, change management is also a function of the product or project team before the release of an MVP or a large-scale platform like SAP. Changes to your code base come not only from customer feedback but also from modifications to commercial off-the-shelf (COTS) software packages like Oracle or SAP. These change requests (CRs) typically arise from an extensive Fit/Gap analysis conducted by your process leaders and subject matter experts (SMEs).

In our opinion, it is critically important to appoint a Value Stream Leader to define your approach to change management and how it relates to both quality assurance and long-term maintenance (post-go-live planning). The specifics of how your teams will manage post-go-live bug fixes, changes, and incidents may not be necessary at the outset of the project, but a generalized process/framework should be laid out from the start. This should include:

- Where will your change requests be logged? What tool?

- How will your change requests be traced/linked back to the originating requirement?

- How will you analyze and determine the impact of changes to originating requirements? Will it be a tool-driven change impact analysis approach using products like Panaya Change Impact Analyzer, or will you adopt a qualitative SME-based review of all changes to determine impact?

- Who will champion the coordination of changes with your Quality Assurance Leader to help properly identify regression test scope? How often will that occur?

- Who is responsible for approving changes?

Both general and specific questions like these need to be considered and answered very early in the software development lifecycle to ensure traceability is created and maintained from the start. As the product evolves in feature and functionality, it becomes increasingly important to maintain discipline and follow the process/framework that you laid out for change management.
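To make the traceability questions above concrete, here is a minimal Python sketch of the kind of check a Value Stream Leader might run against exported records. It assumes a deliberately simplified, hypothetical data model: the `ChangeRequest` and `Requirement` classes, their fields, and the `audit_traceability` function are illustrative only and are not part of any tool's API. Each change request optionally points at one requirement, and each requirement lists the tests that cover it. The check flags change requests with no valid link back to a requirement, and requirements with no associated test.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class ChangeRequest:
    cr_id: str
    description: str
    # Hypothetical link field back to the originating requirement (None = not linked).
    requirement_id: Optional[str] = None


@dataclass
class Requirement:
    req_id: str
    # IDs of the test cases that cover this requirement.
    test_ids: List[str] = field(default_factory=list)


def audit_traceability(
    changes: List[ChangeRequest], requirements: List[Requirement]
) -> Tuple[List[str], List[str]]:
    """Return (unlinked change IDs, uncovered requirement IDs), each sorted."""
    known = {r.req_id for r in requirements}
    # Unlinked: no requirement at all, or a link to a requirement that does not exist.
    unlinked = sorted(c.cr_id for c in changes if c.requirement_id not in known)
    # Uncovered: a requirement with no associated test case.
    uncovered = sorted(r.req_id for r in requirements if not r.test_ids)
    return unlinked, uncovered


# Hypothetical sample data, for illustration only.
requirements = [Requirement("REQ-1", ["TC-10", "TC-11"]), Requirement("REQ-2")]
changes = [
    ChangeRequest("CR-1", "Adjust pricing condition", "REQ-1"),
    ChangeRequest("CR-2", "Add field to order screen"),  # never linked
    ChangeRequest("CR-3", "Change approval flow", "REQ-9"),  # points at a missing requirement
]

print(audit_traceability(changes, requirements))
# (['CR-2', 'CR-3'], ['REQ-2'])
```

In practice, the records would come from wherever your change requests and requirements actually live (a SharePoint list export, Micro Focus ALM, Azure DevOps). The point of the sketch is that this kind of check is only possible if the link fields exist and are filled in consistently from day one.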

Quality Assurance and Testing

Quality Assurance (QA) is also a broad term in software circles, and in most organizations it falls under software testing leadership. However, the function of QA spans the entire product value stream. From requirements intake all the way through release to production, and back up through the value stream for incidents and changes, QA touches every aspect of your product value stream. Recognizing that QA is everyone’s responsibility can create a sense of urgency around maintaining the processes that preserve traceability.

For example, if your Solutions Leader doesn’t subscribe to the philosophy that “Quality is everyone’s responsibility,” they may not be inclined to verify that all changes are linked to the original requirements, or that each requirement has an associated test created according to the standards set by your test leadership team. To properly scope a regression test suite, an effective test manager must understand how a change in one area might ripple into existing functionality in other areas. For instance, a slight modification to an IDoc responsible for processing orders entered in SAP CRM can cause a cascade of failures when those orders are processed in SAP ECC.

This cascade of failures may result in delayed shipments, customer dissatisfaction, and ultimately lost revenue. The ripple effect of negative consequences is difficult to quantify fully and accurately, but preventing it starts with maintaining the discipline within your project team to enforce traceability. We believe that a Value Stream Leader can champion Deming’s motto that “Quality is everyone’s responsibility” and keep reminding everyone on a product or project team of it.
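The ripple effect described above can be modeled as a walk over a dependency graph. The Python sketch below is one simple way to do it, under stated assumptions: you maintain a hypothetical `downstream_of` map recording which requirements or interfaces consume the output of another, plus a `tests_for` map from each item to its covering tests. A breadth-first traversal from the changed items collects everything the change could ripple into, and the union of their tests becomes a candidate regression scope. The function name and the IDs (for example `CRM-IDOC-ORDERS`) are made up to echo the SAP CRM to SAP ECC example; they are not real SAP object names.

```python
from collections import deque
from typing import Dict, List, Tuple


def regression_scope(
    changed: List[str],
    downstream_of: Dict[str, List[str]],
    tests_for: Dict[str, List[str]],
) -> Tuple[List[str], List[str]]:
    """Return (impacted item IDs, candidate regression test IDs), each sorted."""
    impacted = set(changed)
    queue = deque(changed)
    # Breadth-first walk: follow each item to everything downstream of it.
    while queue:
        current = queue.popleft()
        for nxt in downstream_of.get(current, []):
            if nxt not in impacted:  # visit each item once, even if the graph has cycles
                impacted.add(nxt)
                queue.append(nxt)
    tests = sorted({t for item in impacted for t in tests_for.get(item, [])})
    return sorted(impacted), tests


# Hypothetical dependency and coverage data echoing the CRM -> ECC example.
downstream_of = {
    "CRM-IDOC-ORDERS": ["ECC-ORDER-PROCESSING"],
    "ECC-ORDER-PROCESSING": ["ECC-DELIVERY", "ECC-BILLING"],
}
tests_for = {
    "CRM-IDOC-ORDERS": ["TC-101"],
    "ECC-ORDER-PROCESSING": ["TC-201", "TC-202"],
    "ECC-DELIVERY": ["TC-301"],
    "ECC-PRICING": ["TC-401"],  # not downstream of the change, so not selected
}

impacted, tests = regression_scope(["CRM-IDOC-ORDERS"], downstream_of, tests_for)
print(impacted)  # ['CRM-IDOC-ORDERS', 'ECC-BILLING', 'ECC-DELIVERY', 'ECC-ORDER-PROCESSING']
print(tests)     # ['TC-101', 'TC-201', 'TC-202', 'TC-301']
```

Notice that `ECC-BILLING` is impacted but has no covering test. The graph walk surfaces that gap, but it can only be as good as the traceability links and dependency data your team actually maintains.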

Long-term Software Maintenance

The phrase “begin with the end in mind” was popularized by the late Stephen Covey as one of the habits in his world-famous book, The 7 Habits of Highly Effective People. In today’s digital age, we are constantly pressured to deliver software faster, faster, and faster. As the business continuously pushes product teams for speed, it’s natural to overlook long-term consequences in favor of pleasing whoever is breathing down your neck at the moment. Although the short term tends to win out, a Value Stream Leader is disciplined in implementing value stream management by starting with the end in mind. Ask yourself these questions:

- Do your existing ITSM and QA processes carry over to a post-go-live environment? If not, what steps can you take immediately to ensure end-to-end traceability is preserved during product development and afterwards? As outlined above, a lack of traceability can spell disaster for your test coverage efforts, leading to spikes in leaked defects and critical incidents.

- What metrics are you monitoring today that are transferrable to a post go-live environment? For example, are you monitoring Defect Detection Percentage (DDP) between testing environments (e.g., System to Regression)? Would this same metric also apply to your production environment?

- Will incidents created in your ITSM tool of choice (e.g., Zendesk, ServiceNow) automatically synchronize to bug tracking tools like Atlassian JIRA and Azure DevOps? If so, do you intend to use a marketplace add-on to integrate these tools, or a broader value stream integration platform like ConnectALL? If a change is required (rather than a bug fix), where will the change be logged: within your Agile planning tool (like Azure DevOps), in a SharePoint list, or in your test management tool like Micro Focus ALM? More importantly, how will the change be linked to the original requirement, and to the associated tests needed to properly regression test existing functionality?
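Defect Detection Percentage is commonly defined as the share of defects that a given stage catches out of all the defects present when that stage ran: the defects found in the stage, divided by the defects found in the stage plus those found afterwards (in later test stages or in production), times 100. A minimal Python sketch follows; the function name and the sample counts are hypothetical.

```python
def defect_detection_percentage(found_in_stage: int, found_later: int) -> float:
    """DDP = found_in_stage / (found_in_stage + found_later) * 100.

    found_in_stage: defects caught by the stage being measured.
    found_later: defects that escaped it and were caught afterwards
                 (by a later test stage or in production).
    """
    total = found_in_stage + found_later
    if total == 0:
        raise ValueError("no defects recorded, so DDP is undefined")
    return 100.0 * found_in_stage / total


# Hypothetical counts: system test caught 45 defects, 5 escaped to regression.
print(defect_detection_percentage(45, 5))   # 90.0
# The same formula post go-live: regression caught 80, production surfaced 20.
print(defect_detection_percentage(80, 20))  # 80.0
```

Because the formula only needs two counts, the same metric works before and after go-live. After go-live, however, the “found later” count has to come from production incidents that are reliably tied back to defects, which is only possible if the incident-to-defect synchronization questions above have been answered.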

Summary

Architecting your value stream for speed and quality does not happen by accident.

The Value Stream Triad does not prescribe a specific set of tools, but it does call for considering the touchpoints between three important components of your software delivery lifecycle: Change Management, Quality Assurance, and Maintenance. Your review of these touchpoints should cover a variety of considerations, including:

- People – How disciplined is your staff in maintaining a “Quality is Everyone’s Responsibility” mindset? Would it benefit you to appoint a Value Stream Leader to work across every functional area in your software value stream? Do you have the right leaders in place? Are you verifying assumptions to ensure adequate test coverage for every release cycle/sprint?

- Process – Are you starting with the end in mind? Have you considered how your team will maintain that new software product after it is in the hands of existing and new customers? Have you created a maintenance strategy that references your master test strategy and change management strategy? Although Agile values working software over comprehensive documentation, it is still vital to think through your critical processes and document them accordingly. Your Value Stream Leader can help remind, champion, and enforce process across your value stream. They might not own the outcomes of each process, but they can facilitate and shepherd its activities, including the discipline of traceability.

- Technology – Are you managing software changes in isolation, for example in SharePoint, without an automated tie-in to the system where your original design documents were created and stored? Are you managing your manual tests in a different system from requirements, changes, or defects? A Value Stream Leader can help identify the tools in your value stream and recommend an integration strategy that complements optimal processes and people utilization. For example, technology like ConnectALL can automate the synchronization of a change request from an ITSM solution to a test management solution. From there, you can coach your team to link changes to the original requirements and to confirm that every requirement has adequate test coverage.

By intentionally designing your product value stream, you can prevent future issues from occurring. Just as exercise, healthy eating, and stress management protect your own health, giving your value stream the same preventive care can lead to better business outcomes. Establishing preventive processes, coaching your people, and adopting the right technology to drive speed and quality is an intentional activity, not something that happens by accident. At ConnectALL, we understand that every value stream is different. That’s why we offer a breadth of tailored services that align with your unique requirements. Learn more about our menu of value stream optimization services here: https://www.connectall.com/services/

Johnathan McGowan is a Sr Solutions Architect at ConnectALL. He serves as a customer-facing technical resource for the ConnectALL integration tool. He works with Account Managers to assess prospect needs and build demo integration solutions, guide prospects through product evaluations, and assist clients with their production deployments.